How to A/B Test Your Hooks: Find What Works for Your Audience

Stop guessing which hook is better. Learn systematic A/B testing methods for TikTok hooks with frameworks, variables to test, and how to analyze results.

Stop guessing which hook is better. Test it.

You write a hook. It feels good. But is it actually good? Or is there a better version you didn't try?

Most creators post their first instinct. Top creators test multiple versions and let data decide.

This guide shows you how to systematically A/B test your hooks — so you know what works for YOUR audience, not what works in theory.

<video src="https://s3.us-east-1.amazonaws.com/remotionlambda-useast1-k5qomcb1pb/renders/otgexxscxa/out.mp4" controls playsinline style="width:100%;border-radius:12px;margin:24px 0;"></video>

Table of Contents

- Why A/B Test Hooks?

- The Hook Testing Framework

- Methods for Testing Hooks

- What to Test: Variables That Matter

- Analyzing Your Results

- Building Your Hook Database

Why A/B Test Hooks? {#why-ab-test}

The Problem with Intuition

Your instinct about your own content is unreliable:

- You're too close to the content

- You know context the viewer doesn't

- What feels clever to you might not register with strangers

- Your audience might respond differently than "general" audiences

The Data Advantage

Creators who test hooks tend to see:

- 2-3x higher average views — because they post winners, not guesses

- Faster learning — patterns emerge from data

- Audience understanding — you learn what YOUR followers respond to

- Compounding improvement — each test makes you better

What Testing Reveals

A/B testing hooks shows you:

- Which emotional triggers your audience responds to

- Optimal hook length for your content

- Whether questions or statements perform better

- Which words/phrases boost performance

The Hook Testing Framework {#testing-framework}

Step 1: Generate Multiple Hooks

For every piece of content, write 3 to 5 hook variations.

Vary by:

- Emotional angle (curiosity vs fear vs excitement)

- Structure (question vs statement vs action)

- Length (short punch vs setup)

- Specificity (vague vs detailed)

Example — Same content, 5 hooks:

- "You've been doing this wrong your whole life"

- "The $5 fix that changed everything"

- "Why does nobody talk about this?"

- "I wish I knew this 10 years ago"

- "3 seconds. That's all it takes."

Step 2: Pre-Test with AI

Before investing time in filming, get AI feedback:

- Score each variation

- Identify strongest candidates

- Get improvement suggestions

- Eliminate weak options

This narrows your test from 5 hooks to the top 2-3.
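Narrowing the list is just a sort by score followed by keeping the top few. Here is a minimal sketch. It assumes you have already copied a numeric score for each hook out of whatever AI tool you use. The `shortlist` helper and the example scores are hypothetical, not real Hook Analyzer output.

```python
def shortlist(scored_hooks: dict[str, float], keep: int = 3) -> list[str]:
    """Return the `keep` highest-scoring hooks, best first.

    sorted() stays stable even with reverse=True, so tied hooks keep
    the order in which you wrote them.
    """
    ranked = sorted(scored_hooks, key=lambda hook: scored_hooks[hook], reverse=True)
    return ranked[:keep]


# Illustrative scores for the five example hooks (hypothetical, not tool output).
scores = {
    "You've been doing this wrong your whole life": 7.5,
    "The $5 fix that changed everything": 8.0,
    "Why does nobody talk about this?": 6.0,
    "I wish I knew this 10 years ago": 7.0,
    "3 seconds. That's all it takes.": 8.0,
}
to_film = shortlist(scores)
```

Treat the scores as a filter rather than a verdict. The real-audience methods decide the winner.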

Step 3: Test in Real Conditions

Methods for real-world testing (covered in detail below):

- Story/Reel tests

- Split posting

- Multi-platform tests

- Audience polls

- Same-content reposts

Step 4: Measure & Document

Track results systematically:

- Views at 24h, 48h, 7d

- Retention rate (if available)

- Engagement rate

- Follow-through (follows, profile visits)
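The checkpoints also keep comparisons fair. A post that is seven days old will almost always have more views than one posted yesterday. One way to handle this is to log a snapshot at each checkpoint and compare two hooks only at the latest checkpoint both posts have reached. Here is a minimal sketch. `HookResult` and `compare_at_same_age` are hypothetical names, and the numbers are illustrative.

```python
from dataclasses import dataclass, field
from typing import Optional

# Snapshot times from Step 4, earliest first.
CHECKPOINTS = ("24h", "48h", "7d")


@dataclass
class HookResult:
    hook: str
    # Cumulative views recorded at each checkpoint, e.g. {"24h": 9000}.
    views: dict[str, int] = field(default_factory=dict)


def latest_shared_checkpoint(a: HookResult, b: HookResult) -> Optional[str]:
    """Latest checkpoint for which both posts have a recorded value."""
    shared = [cp for cp in CHECKPOINTS if cp in a.views and cp in b.views]
    return shared[-1] if shared else None


def compare_at_same_age(a: HookResult, b: HookResult) -> tuple[str, int, int]:
    """Return (checkpoint, views_a, views_b) at the latest age both posts reached."""
    cp = latest_shared_checkpoint(a, b)
    if cp is None:
        raise ValueError("no checkpoint recorded for both hooks yet")
    return cp, a.views[cp], b.views[cp]


# Illustrative: version B went up later and only has two snapshots so far.
a = HookResult("Stop doing X", {"24h": 9000, "48h": 21000, "7d": 45000})
b = HookResult("How to do X", {"24h": 4000, "48h": 8000})
checkpoint, views_a, views_b = compare_at_same_age(a, b)
```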

Step 5: Apply & Iterate

Use winning patterns for future content. Test again to refine.

Methods for Testing Hooks {#testing-methods}

Method 1: The AI Pre-Test

Best for: Filtering before you film

How it works:

- Write 5+ hook variations

- Test each in Hook Analyzer

- Note scores and feedback

- Film only the top 2-3 scorers

Pros: Fast, free, no posting required

Cons: AI prediction isn't perfect; real audience might differ

Method 2: Story/Reel Test

Best for: Quick validation before main post

How it works:

- Post hook A as a Story with the question "Want to see this video?"

- Post hook B as a separate Story with the same question

- Measure responses (replies, polls, sticker taps)

- Use winning hook for the main post

Pros: Real audience data, low stakes

Cons: Story audience might differ from FYP audience

Method 3: Split Posting (Different Times)

Best for: Thorough testing when you have patience

How it works:

- Film the same content with different hooks

- Post version A

- Wait 48-72 hours, note performance

- Post version B

- Compare results

Pros: Real FYP data for each version

Cons: Time-consuming, and the algorithm may distribute each post differently, so part of any gap can come from timing rather than the hook

Method 4: Multi-Platform Test

Best for: Finding universal winners

How it works:

- Post hook A on TikTok

- Post hook B on Instagram Reels

- Post hook C on YouTube Shorts

- Compare performance across platforms

Pros: More data, platform-specific insights

Cons: Audiences differ across platforms, so a gap in results may reflect the platform rather than the hook

Method 5: Audience Poll

Best for: Direct feedback from followers

How it works:

- Show two hooks (text or video clips)

- Ask "Which would make you watch?"

- Let audience vote

Pros: Direct feedback, engagement boost

Cons: What people SAY they'd watch ≠ what they actually watch

Method 6: The Same-Content Repost

Best for: Definitive testing (use sparingly)

How it works:

- Post video with hook A

- If it underperforms, delete it after 24-48h

- Re-edit with hook B

- Repost

- Compare

Pros: Same content, isolated hook variable

Cons: The algorithm might penalize reposts, so use this rarely

What to Test: Variables That Matter {#what-to-test}

Variable 1: Emotional Angle

Test different emotions for the same content:

- Curiosity: "You won't believe what happened next"

- Fear: "This mistake is costing you money"

- Excitement: "This changes everything"

- Humor: "I can't be the only one who does this"

Variable 2: Structure

- Statement: "Your morning routine is wrong"

- Question: "Why does your morning feel so hard?"

- Command: "Stop doing this in the morning"

- Story start: "So I tried something different this morning..."

Variable 3: Specificity

- Vague: "The habit that changed my life"

- Specific: "The 5-minute habit that cured my insomnia"

Variable 4: Length

- Ultra-short: "This. Changes. Everything."

- Standard: "Here's what nobody tells you about [topic]"

- Setup: "Everyone says you should do X. I tried the opposite for 30 days."

Variable 5: First Word

The first word matters disproportionately:

Test starting with:

- "You" (personal)

- "I" (story)

- "This" (demonstration)

- "Stop" (command)

- "Why" (question)

- Number (specificity)
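To test whether the first word matters for your audience, each hook needs a first-word label that you can later group results by. Here is a minimal sketch. The category names mirror the list above, and `first_word_type` is a hypothetical helper. Contractions such as "You've" are tagged by their leading letters, and any word not on the list becomes "other".

```python
import re

# First-word categories from the list above.
FIRST_WORD_TYPES = {
    "you": "personal",
    "i": "story",
    "this": "demonstration",
    "stop": "command",
    "why": "question",
}


def first_word_type(hook: str) -> str:
    """Label a hook by its first run of letters or digits."""
    match = re.search(r"[A-Za-z0-9]+", hook)
    if match is None:
        return "other"
    word = match.group(0)
    if word.isdigit():
        return "number"
    return FIRST_WORD_TYPES.get(word.lower(), "other")


examples = [
    "You've been doing this wrong your whole life",
    "Stop doing this in the morning",
    "3 seconds. That's all it takes.",
    "The $5 fix that changed everything",
]
labels = [first_word_type(h) for h in examples]
```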

Variable 6: Visual First Frame

Even with the same words, test:

- Face vs no face

- Close-up vs wide

- Text overlay vs no text

- Action vs static

Analyzing Your Results {#analyzing-results}

Metrics to Compare

Primary:

- Views at 24h

- Average watch time / retention

- Percentage of views from the For You feed (FYP)

Secondary:

- Engagement rate

- Save rate

- Share rate

- Follow rate
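Raw counts favor whichever post reached more people. Converting the secondary metrics to per-view rates makes them easier to compare. Here is a minimal sketch, assuming you copy the counts by hand from your analytics. The `PostStats` field names are hypothetical. The engagement-rate formula, likes plus comments plus shares divided by views, is one common convention and not an official platform definition.

```python
from dataclasses import dataclass


@dataclass
class PostStats:
    # Hypothetical field names; fill them in from your analytics screen.
    views: int
    fyp_views: int  # views that came from the For You feed
    likes: int
    comments: int
    shares: int
    saves: int
    new_follows: int


def per_view_rates(s: PostStats) -> dict[str, float]:
    """Express each count as a fraction of total views."""
    if s.views <= 0:
        raise ValueError("rates are undefined until the post has views")
    return {
        "fyp_share": s.fyp_views / s.views,
        # Assumed convention; tools disagree, so keep one formula for every test.
        "engagement_rate": (s.likes + s.comments + s.shares) / s.views,
        "save_rate": s.saves / s.views,
        "share_rate": s.shares / s.views,
        "follow_rate": s.new_follows / s.views,
    }


example = per_view_rates(
    PostStats(views=10_000, fyp_views=8_500, likes=900, comments=60,
              shares=40, saves=120, new_follows=25)
)
```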

Sample Size Matters

Don't draw conclusions from a single test. Look for patterns across many:

- Does curiosity consistently beat fear?

- Do questions outperform statements?

- Does specificity help or hurt?
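A sign test is one way to put a number on a pattern. Record which option won each head-to-head test, then ask how likely that many wins would be if the two options were really equal, like a 50/50 coin flip. Here is a minimal sketch using only the standard library. This statistical technique is our addition and not something the original method or Hook Analyzer specifies. The example outcomes are made up.

```python
from math import comb


def sign_test_p(wins: int, trials: int) -> float:
    """One-sided sign test.

    Probability of at least `wins` wins in `trials` head-to-head tests
    if both options were equally likely to win each time.
    """
    if not 0 <= wins <= trials:
        raise ValueError("wins must be between 0 and trials")
    return sum(comb(trials, k) for k in range(wins, trials + 1)) / 2 ** trials


# Illustrative log: the winner of each curiosity-vs-fear test, one entry per test.
winners = ["curiosity", "curiosity", "fear", "curiosity",
           "curiosity", "curiosity", "curiosity", "curiosity"]
curiosity_wins = winners.count("curiosity")          # 7
p_value = sign_test_p(curiosity_wins, len(winners))  # 9/256, about 0.035
```

A single win gives `sign_test_p(1, 1) == 0.5`, which is exactly what a coin flip would produce. That is why one test proves nothing. Leave ties out of the count.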

The Comparison Framework

For each test, document:

Hook A: [Text]

Hook B: [Text]

Variable tested: [What's different]

Result A: [Views, retention, engagement]

Result B: [Views, retention, engagement]

Winner: [A/B]

Learning: [What this tells you]
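The template maps directly onto a small record type. Here is a minimal sketch. `HookTest` and `winner` are hypothetical names, and the example reuses the numbers from the database template. Picking the deciding metric before you post stops you from choosing whichever number happens to favor the hook you liked.

```python
from dataclasses import dataclass


@dataclass
class HookTest:
    hook_a: str
    hook_b: str
    variable: str               # the single thing that differs between A and B
    result_a: dict[str, float]  # e.g. {"views": 45000, "retention": 0.72}
    result_b: dict[str, float]
    learning: str = ""

    def winner(self, metric: str = "views") -> str:
        """Return "A", "B", or "tie" on the chosen metric."""
        a, b = self.result_a[metric], self.result_b[metric]
        if a == b:
            return "tie"
        return "A" if a > b else "B"


stop_vs_how = HookTest(
    hook_a="Stop doing X",
    hook_b="How to do X",
    variable="structure (command vs. how-to)",
    result_a={"views": 45_000, "retention": 0.72},
    result_b={"views": 12_000, "retention": 0.58},
    learning="Commands work",
)
```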

Building Your Hook Database {#hook-database}

Why Keep a Database

Over time, your tests reveal patterns specific to YOUR audience. A database lets you:

- Reference winning formulas

- Avoid repeating failed approaches

- Spot trends

- Train your intuition with data

What to Track

For each hook tested, save:

- Hook text

- Content type (tutorial, story, opinion, etc.)

- Score from Hook Analyzer

- Actual performance (views, retention)

- What you learned

Database Template

| Date | Content Type | Hook | AI Score | Views | Retention | Learning |
|------|--------------|------|----------|-------|-----------|----------|
| 2/25 | Tutorial | "Stop doing X" | 8 | 45K | 72% | Commands work |
| 2/25 | Tutorial | "How to do X" | 6 | 12K | 58% | Direct = weak |
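A spreadsheet is enough for this. If you would rather append rows from a script, a CSV file opens in any spreadsheet app. Here is a minimal sketch using Python's standard `csv` module. The file name `hook_database.csv`, the snake_case column names, and both helper functions are hypothetical. Dates are stored as ISO strings so they sort correctly, and the year in the example is illustrative.

```python
import csv
import tempfile
from pathlib import Path

# Columns from the template table.
FIELDS = ["date", "content_type", "hook", "ai_score", "views", "retention", "learning"]


def append_row(path: Path, row: dict) -> None:
    """Append one tested hook, writing the header first if the file is new or empty."""
    needs_header = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if needs_header:
            writer.writeheader()
        writer.writerow(row)


def load_rows(path: Path) -> list[dict[str, str]]:
    """Read every row back; csv returns all values as strings."""
    with path.open(newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


# Example with the two template rows, written to a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    db = Path(tmp) / "hook_database.csv"
    append_row(db, {"date": "2025-02-25", "content_type": "Tutorial", "hook": "Stop doing X",
                    "ai_score": 8, "views": 45000, "retention": 0.72, "learning": "Commands work"})
    append_row(db, {"date": "2025-02-25", "content_type": "Tutorial", "hook": "How to do X",
                    "ai_score": 6, "views": 12000, "retention": 0.58, "learning": "Direct = weak"})
    rows = load_rows(db)
```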

Quarterly Review

Every 3 months, review your database:

- What patterns emerged?

- What stopped working?

- What new approaches should you test?
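To answer "What stopped working?" with numbers, you can compare each pattern's median views this quarter against the previous quarter. Here is a minimal sketch. It assumes each row carries a real `date` and a `pattern` tag that you assign yourself, such as hook structure or first-word type. The function names, the example rows, and the 50% drop threshold are all hypothetical choices. Medians are used so that one viral outlier doesn't dominate the comparison.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import median


def median_views_by(rows: list[dict], key: str, start: date, end: date) -> dict[str, float]:
    """Median views for each value of `key`, using rows dated in [start, end)."""
    groups: dict[str, list[int]] = defaultdict(list)
    for row in rows:
        if start <= row["date"] < end:
            groups[row[key]].append(row["views"])
    return {value: median(views) for value, views in groups.items()}


def stopped_working(previous: dict[str, float], current: dict[str, float],
                    drop: float = 0.5) -> list[str]:
    """Patterns whose median views fell below `drop` times last quarter's median."""
    return sorted(p for p in current if p in previous and current[p] < drop * previous[p])


# Illustrative rows and review date.
rows = [
    {"date": date(2025, 2, 10), "pattern": "command", "views": 45000},
    {"date": date(2025, 3, 5), "pattern": "command", "views": 38000},
    {"date": date(2025, 2, 20), "pattern": "question", "views": 12000},
    {"date": date(2025, 5, 12), "pattern": "command", "views": 15000},
    {"date": date(2025, 6, 2), "pattern": "command", "views": 11000},
    {"date": date(2025, 5, 20), "pattern": "question", "views": 14000},
]
review_day = date(2025, 6, 30)
this_start = review_day - timedelta(days=90)
last_start = this_start - timedelta(days=90)
previous = median_views_by(rows, "pattern", last_start, this_start)
current = median_views_by(rows, "pattern", this_start, review_day)
faded = stopped_working(previous, current)  # ["command"]
```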

The Testing Mindset

Every video is an experiment. Every hook is a hypothesis.

The creators who win aren't more talented — they're more scientific. They test, measure, learn, repeat.

Start testing today:

- Write 3 hooks for your next video

- Test them in Hook Analyzer

- Post the winner

- Document results

- Repeat

In 30 days, you'll know more about your audience than most creators learn in a year.

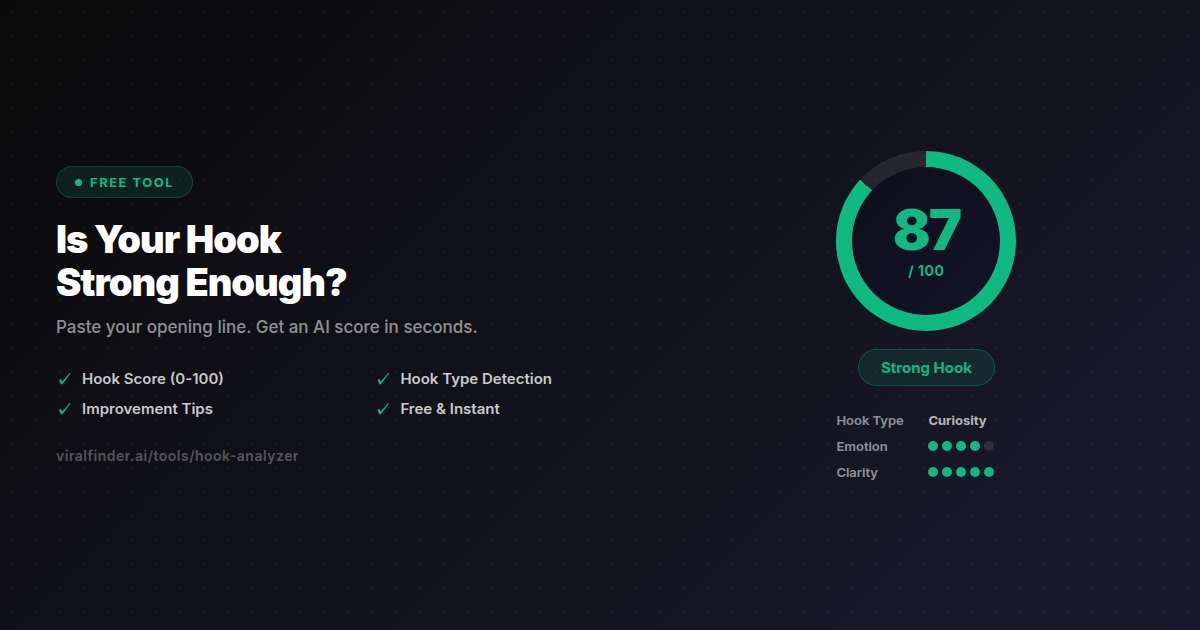

🛠️ Pre-Test Your Hooks

Hook Analyzer — Score multiple hooks before you film. Find the winner in seconds.

📚 Related Posts

- 15 Hook Formulas That Get Millions of Views

- 7 Hook Mistakes That Kill Your Views

- The Psychology of Viral Hooks

